Beyond the Hype

In this series of blogs, the DDATS team will be evaluating popular AI toolsets.

3/4/2026 · 2 min read

AI tools are everywhere right now. Every week there is a new model, platform or automation framework.

But most conversations focus on productivity, not cost. Very few look at how these tools actually perform in real workflows and what they cost to run.

At DDATS, my team and I are particularly interested in how AI can be adopted across the UK public sector and the typical .gov technology stack. One of the ways we approach this is by applying tools to practical use cases.

Recently I tested a simple but representative scenario from the Bid Advisory space.

Bid teams often deal with peaks and troughs in workload. I wanted to create an AI-driven workflow that could understand documents, extract key information and generate structured summaries in a repeatable way.

This type of workflow is often described as a Gen-Agentic architecture, where a sequence of processing steps produces a structured output.
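As a rough illustration of that idea, here is a minimal Python sketch of a three-step pipeline: read, extract, summarise. It is not the workflow built in either test. The names `BidSummary`, `run_pipeline` and `fake_model` are hypothetical, and `fake_model` returns canned text in place of a real hosted model call so the example runs offline.

```python
import json
from dataclasses import dataclass, asdict
from typing import Callable


@dataclass
class BidSummary:
    """Structured output produced at the end of the pipeline."""
    source: str
    key_points: list
    summary: str


def read_document(name: str, text: str) -> dict:
    # Step 1: normalise whitespace (a stand-in for real document parsing).
    return {"source": name, "text": " ".join(text.split())}


def extract_key_points(doc: dict, model: Callable[[str], str]) -> list:
    # Step 2: ask the model for key points, one per line, and clean them up.
    reply = model("List the key requirements, one per line:\n" + doc["text"])
    return [line.strip("- ").strip() for line in reply.splitlines() if line.strip()]


def summarise(doc: dict, points: list, model: Callable[[str], str]) -> BidSummary:
    # Step 3: ask the model for a one-sentence summary of the key points.
    reply = model("Summarise in one sentence:\n" + "\n".join(points))
    return BidSummary(doc["source"], points, reply.strip())


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a hosted model; returns canned text only.
    if prompt.startswith("List"):
        return "- Deadline: 30 days\n- Budget cap applies"
    return "Tender with a 30-day deadline and a budget cap."


def run_pipeline(name: str, text: str, model: Callable[[str], str] = fake_model) -> BidSummary:
    doc = read_document(name, text)
    points = extract_key_points(doc, model)
    return summarise(doc, points, model)


result = run_pipeline("tender.txt", "Example   tender text.")
print(json.dumps(asdict(result), indent=2))
```

Note that each document triggers two model calls in this sketch. Swapping `fake_model` for a real model client would leave the orchestration unchanged, but every call would then consume paid usage.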

Testing the Microsoft Route

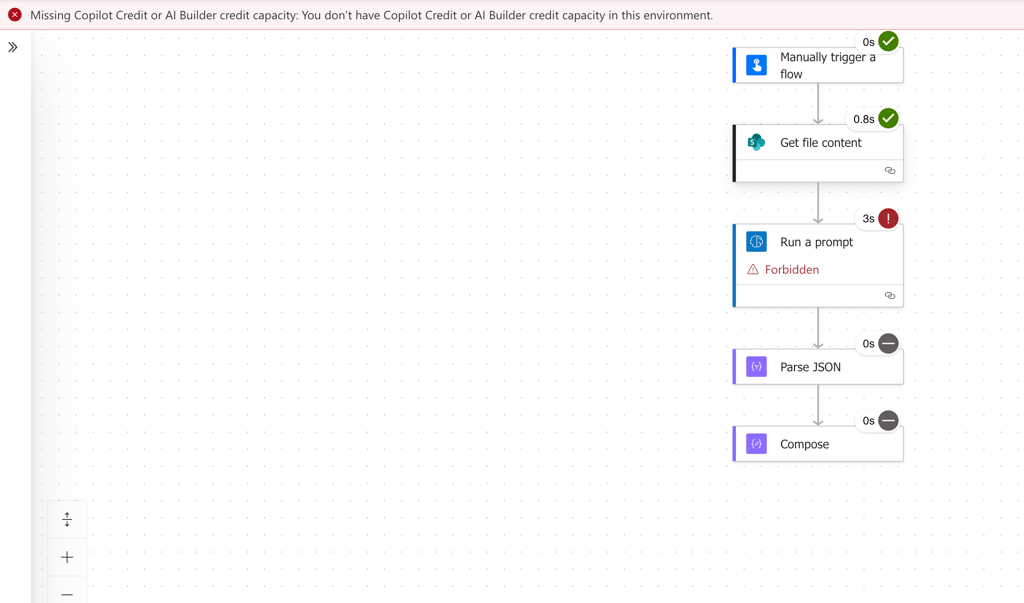

Given the prevalence of Microsoft across government, the first approach used Power Automate.

It was easy to assemble an AI flow and test prompts. For basic automation the platform works well.

However, limitations appeared quickly when processing multiple document types. Power Automate relies heavily on predefined actions, which makes complex document pipelines harder to implement.

The more interesting observation came when the workflow was executed.

The automation layer was not the constraint. The model processing layer was, because it required usage credits.

A Developer Approach

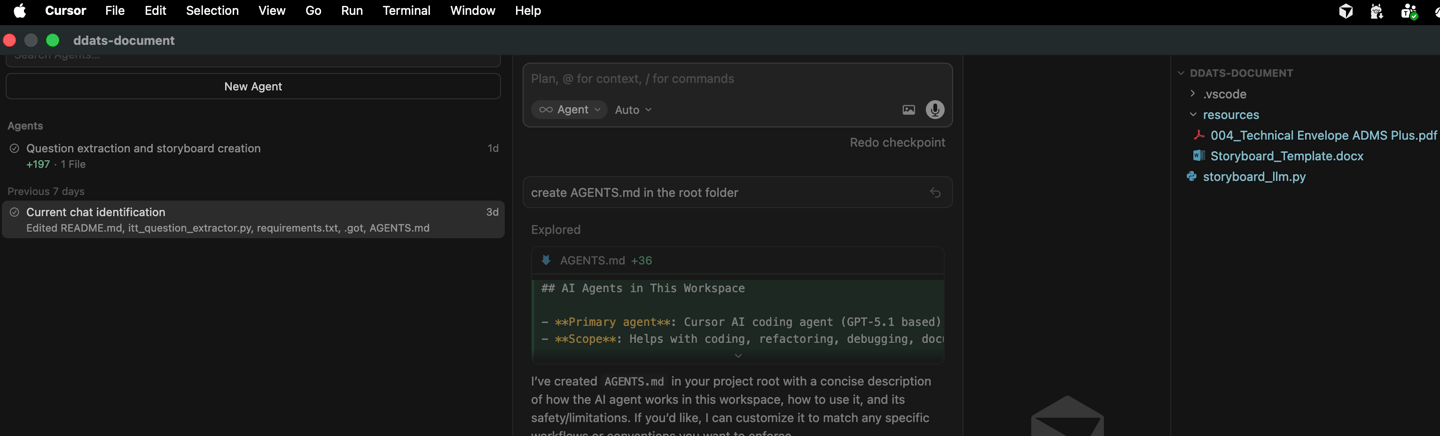

To test a more flexible architecture, I rebuilt the same workflow using Cursor, an AI-enabled IDE.

Cursor quickly generated the Python request code and prompts. From an engineering perspective, the orchestration of document reading and prompt generation was straightforward.

However, when scaling the workflow to handle multiple documents, the architecture again relied on OpenAI API credits for parsing and model processing.

Once again the same pattern appeared.

The tools are getting better. The orchestration is getting easier.

But the model layer is where the cost sits.

A Consistent Architectural Pattern

Across both approaches the conclusion was clear.

Whether the entry point is low-code automation or developer tooling, the cost and scaling considerations ultimately sit in the model processing layer.

Can AI Tools Pass the 3-Step Pricing Test?

One observation from this exercise is that AI pricing is not always as transparent as it appears.

Many tools look inexpensive or even free at the interface level. The real cost sits behind the scenes in model usage, tokens and API consumption.
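To make the token arithmetic concrete, here is a small back-of-envelope estimator in Python. It is a sketch under stated assumptions: the function name `estimate_cost` is hypothetical, and the volumes and per-million-token prices are placeholders chosen for easy arithmetic, not any vendor's actual rates.

```python
def estimate_cost(num_docs, calls_per_doc, input_tokens_per_call,
                  output_tokens_per_call, price_in_per_million,
                  price_out_per_million):
    """Estimate model spend for a batch of documents.

    Prices are expressed per one million tokens, in whatever currency
    the caller uses. All inputs are assumptions supplied by the caller.
    """
    total_calls = num_docs * calls_per_doc
    total_in = total_calls * input_tokens_per_call
    total_out = total_calls * output_tokens_per_call
    cost_in = total_in / 1_000_000 * price_in_per_million
    cost_out = total_out / 1_000_000 * price_out_per_million
    return cost_in + cost_out


# Placeholder figures only: 500 documents, 2 model calls each,
# 4,000 input and 500 output tokens per call.
cost = estimate_cost(
    num_docs=500,
    calls_per_doc=2,
    input_tokens_per_call=4_000,
    output_tokens_per_call=500,
    price_in_per_million=1.00,   # assumed placeholder price
    price_out_per_million=4.00,  # assumed placeholder price
)
print(f"Estimated model cost: {cost:.2f}")  # prints 6.00 for these inputs
```

The point is the shape of the calculation rather than the number. Cost scales with documents, calls per document and tokens per call, and none of those appear on the interface-level price tag.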

The UK Competition and Markets Authority (CMA) recently launched a campaign encouraging businesses to present pricing clearly.

Looking at the current AI tooling landscape through that lens, it is not obvious that many pricing models would pass the same test.

For organisations, particularly in the UK public sector, this matters. AI procurement decisions cannot be based only on capability. They must also consider cost transparency, predictability and value for money.

This is why AI tooling assessments and procurement maturity frameworks will become increasingly important.

What Comes Next

The next step in this exploration is testing local models such as LLaMA, which could run on local infrastructure.

For the public sector, this introduces a different set of trade-offs around cost, infrastructure and data control.

At DDATS, these experiments help us build a clearer view of how organisations can adopt AI responsibly, technically and economically.

Because the real question is no longer whether AI will be used.

It is whether organisations are getting real value for money from it.